Post by stranger on Jan 27, 2010 2:31:08 GMT

Well, Steve certainly gave me my evening chuckle. Steve has never been struck by lightning - which after all is a product of solar energy. As are many of the other energies radiated from the Earth.

Yes, there is a sharp break in the energy of an individual photon at the wavelength/frequency where "waves" become "wavicles." But that does not greatly change the amount of energy the Earth radiates in a given 50 hertz, 50 megahertz, or 50 gigahertz slice of the electromagnetic spectrum.

One need only consider the difference in the amount of infrared and ultraviolet the Earth radiates to see the amount of energy declines with wavelength. As it does with energy emitted from stars, galaxies, and probably from anti-matter annihilation.
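
A minimal sketch of the infrared/ultraviolet comparison using Planck's law. It assumes the surface radiates as a blackbody at 288 K (an assumed mean temperature, not a figure from the post) and counts only thermal emission, not reflected sunlight or lightning:

```python
import math

# Physical constants (SI units, exact CODATA values)
H = 6.62607015e-34   # Planck constant, J s
C = 2.99792458e8     # speed of light, m/s
K_B = 1.380649e-23   # Boltzmann constant, J/K

def planck_radiance(wavelength_m: float, temp_k: float) -> float:
    """Blackbody spectral radiance B_lambda(T) in W m^-2 sr^-1 m^-1."""
    x = H * C / (wavelength_m * K_B * temp_k)
    # expm1 keeps precision for small x; for large x it just makes the result tiny
    return (2.0 * H * C ** 2 / wavelength_m ** 5) / math.expm1(x)

T_SURFACE = 288.0  # assumed mean surface temperature, K
ir = planck_radiance(10e-6, T_SURFACE)   # thermal infrared, 10 micrometres
uv = planck_radiance(0.3e-6, T_SURFACE)  # near ultraviolet, 0.3 micrometres
print(f"B(10 um) = {ir:.3e}, B(0.3 um) = {uv:.3e}, ratio = {ir / uv:.1e}")
```

For a body at terrestrial temperatures the thermal ultraviolet is smaller than the infrared by dozens of orders of magnitude.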

Not to mention the fact that infrared is radiated by literally everything, while terrestrial sources of ultraviolet are almost entirely a product of lightning-like discharges.

Remember, you are talking (through your hat) about half the amount of energy radiated (what else?) by an ordinary 7 Watt night light distributed over a square meter of surface - and several tens of thousands of terahertz in terms of spectrum. It takes very little energy at any specific wavelength to add up to that small amount.
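
The arithmetic behind the night-light comparison, as a sketch. The 30,000 THz bandwidth is a hypothetical stand-in for "several tens of thousands of terahertz", and spreading the flux evenly across it is an assumption made only to show the order of magnitude per slice:

```python
NIGHT_LIGHT_W = 7.0       # night-light power from the post, W
AREA_M2 = 1.0             # spread over one square metre
BANDWIDTH_THZ = 30_000.0  # hypothetical stand-in for "several tens of thousands of terahertz"

flux_w_m2 = 0.5 * NIGHT_LIGHT_W / AREA_M2  # "half the amount" of the night light
bandwidth_ghz = BANDWIDTH_THZ * 1e3        # 1 THz = 1000 GHz
per_ghz = flux_w_m2 / bandwidth_ghz        # average W/m^2 per GHz if spread evenly

print(f"total: {flux_w_m2} W/m^2, average per GHz: {per_ghz:.2e} W/m^2")
```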

As far as "looking down" at the atmosphere, aside from the relatively narrow absorption bands, and clouds, the atmosphere is remarkably clear. If it were not, those nice high-definition satellite images would not be feasible. I suggest you consult an instrumentation specialist regarding what is and is not possible in that regard. You will be surprised.

And finally, remember that the atmosphere's infrared absorption does not stop energy's eventual radiation into space. One can quickly grasp how fast the Earth could cool by spending just one night in a desert, with its 50°C-plus days and near-0°C nights. Only water vapor prevents that.

And it would take roughly 120,000 ppm of CO2 to equal the greenhouse effect of the atmosphere's normal concentration of water vapor.

Stranger

Post by Ratty on Jan 27, 2010 5:05:41 GMT

Basic physics dictates that about 1 degree of warming will occur from the radiative imbalance of doubling CO2 if nothing else in the climate system changed except temperature. [Snip] I expect someone will dispute that .... soon.
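
A back-of-envelope version of the "about 1 degree" figure, as a sketch. It assumes the Earth emits like a blackbody at an effective temperature of 255 K and uses the 3.7 W/m2 doubling figure quoted elsewhere in this thread. Only the Planck (temperature) response is included, with no feedbacks:

```python
SIGMA = 5.670374419e-8  # Stefan-Boltzmann constant, W m^-2 K^-4
T_EMIT = 255.0          # assumed effective emission temperature, K
FORCING = 3.7           # W/m^2 for doubled CO2

# Derivative of sigma*T^4 with respect to T: extra emission per kelvin of warming
planck_response = 4 * SIGMA * T_EMIT ** 3   # W m^-2 K^-1
delta_t = FORCING / planck_response         # warming needed to re-balance, K
print(f"Planck response {planck_response:.2f} W/m^2/K -> no-feedback warming {delta_t:.2f} K")
```

More detailed no-feedback estimates that allow for the atmosphere's vertical temperature structure tend to come out somewhat higher, but of the same order.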

Post by steve on Jan 27, 2010 9:31:36 GMT

> steve writes "Five answers". None of which address the main question. "Is the way radiative energy is transferred WITHIN the atmosphere affected by the other three modes of energy transfer? I agreed with you that it does." If you agree that the way radiative energy is transferred WITHIN the atmosphere is affected by the other modes of energy transfer, then what sense does it make to do calculations on radiative energy transfer while ignoring the other modes of energy transfer? Or am I missing something?

I've given you 5 reasons why the calculation is worth doing. You have said that none of them address the main question. The answer is that the question is misposed. The other modes of energy transfer are not ignored. Some of my answers refer to how the calculations of radiative transfer are combined with calculations of transfer by other methods.

If there were evidence that convection etc. was statistically unpredictable because it was hugely sensitive to temperature, then that would undermine the calculations for future warming, and thereby overwhelm the usefulness of the "forcing" metric. But the weather models that work reasonably effectively on a cold damp day in London are the same as those used on a hot dry day in Alice Springs and a stormy day in the Gulf of Mexico. And they work just as well on a slightly less cold damp day in London, a slightly hotter dry day in Alice Springs, and a slightly more uncomfortably humid stormy day in the Gulf of Mexico, while using the same algorithms for modelling radiation, convection, evaporation, winds, etc. Therefore there is no particular reason to believe that they won't work about as well in 100 years' time, when the reason for the slight extra warmth is that the earth has been heated for a hundred years by a raised level of CO2.

The tentative wording above is because models *aren't* good enough to know exactly how much warming there will be, and it is possible that there *will* be an unexpected "tipping point" that throws the models off completely. But neither of those qualifications means that it is not still useful to look at the forcing metric to answer some questions.

Post by steve on Jan 27, 2010 9:49:01 GMT

stranger writes:

> Well, Steve certainly gave me my evening chuckle. Steve has never been struck by lightning - which after all is a product of solar energy. As are many of the other energies radiated from the Earth. Yes, there is a sharp break in the energy of an individual photon at the wavelength/frequency where "waves" become "wavicles." But that does not greatly change the amount of energy the Earth radiates in a given 50 hertz, 50 megahertz, or 50 gigahertz slice of the electromagnetic spectrum. One need only consider the difference in the amount of infrared and ultraviolet the Earth radiates to see the amount of energy declines with wavelength. As it does with energy emitted from stars, galaxies, and probably from anti-matter annihilation. Not to mention the fact that infrared is radiated by literally everything, while terrestrial sources of ultraviolet are almost entirely a product of lightning-like discharges. Remember, you are talking (through your hat) about half the amount of energy radiated (what else?) by an ordinary 7 Watt night light distributed over a square meter of surface - and several tens of thousands of terahertz in terms of spectrum. It takes very little energy at any specific wavelength to add up to that small amount.

I don't understand you. And you haven't understood me. It's clear we're talking on different wavelengths :-) You'll find I have made that point myself to people who insist that the whole IR spectrum is "completely saturated". That is why I stated that my illustration applied to the wavelengths at the edge of the CO2 emission lines (where the "greenhouse effect" is important). The net outgoing LW is a sum of emission from all the wavelengths. Reducing the radiation at one particular wavelength (in the way I illustrated) results in a net reduction in the total. It doesn't really matter if most of the energy is emitted in X-ray, UV or radio. A 2% reduction at 14 microns has relevance.

And finally, remember that the atmosphere's infrared absorption *slows* the rate of cooling of the earth; it does not stop it. Having spent a few nights in the desert and up mountains, I'm well aware how quickly the earth can cool. But we are not talking about the *total* "greenhouse effect". We are talking about the *change* in the effect caused by an increase of CO2. If I give $10 to Warren Buffett, he will probably be $10 richer than he would otherwise have been for the next few days. The comparison between CO2 and H2O is not so extreme, but we are still only talking about a 1-2% change in outgoing LW for a doubling of CO2. I'm not demanding the earth.
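
The "1-2%" figure can be checked with a one-line ratio, assuming a global-mean outgoing longwave flux of 240 W/m2 (the value northsphinx uses later in the thread) and the 3.7 W/m2 doubling figure:

```python
OUTGOING_LW = 240.0   # W/m^2, assumed global-mean outgoing longwave flux
FORCING_2XCO2 = 3.7   # W/m^2, doubled-CO2 forcing quoted in the thread

fraction_pct = 100.0 * FORCING_2XCO2 / OUTGOING_LW
print(f"doubling CO2 is about {fraction_pct:.1f}% of outgoing longwave")
```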

Post by jimcripwell on Jan 27, 2010 11:49:00 GMT

steve writes "The answer is that the question is misposed. The other modes of energy transfer are not ignored. "

Please excuse my ignorance. Where in glc's calculations leading to the claim that doubling CO2 causes a 3.7 W/m2 (or whatever) change in radiative forcing do we find anything about the other 3 modes of energy transfer? I have failed to find them at all.
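
For reference, the 3.7 W/m2 number is commonly reproduced with the simplified logarithmic fit of Myhre et al. (1998), ΔF = 5.35 ln(C/C0). Whether glc used this particular fit is not stated in the thread. A minimal sketch:

```python
import math

def co2_forcing(c_ppm: float, c0_ppm: float) -> float:
    """Simplified CO2 radiative forcing fit in W/m^2 (Myhre et al., 1998)."""
    return 5.35 * math.log(c_ppm / c0_ppm)

print(round(co2_forcing(560.0, 280.0), 2))  # doubling from a 280 ppm baseline -> 3.71
```

Because the fit is logarithmic, every doubling gives the same forcing regardless of the starting concentration.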

Post by jimcripwell on Jan 27, 2010 11:50:31 GMT

Have I missed another reply to my musings from glc?

Post by steve on Jan 27, 2010 14:17:22 GMT

jimcripwell writes:

> steve writes "The answer is that the question is misposed. The other modes of energy transfer are not ignored." Please excuse my ignorance. Where in glc's calculations leading to the claim that doubling CO2 causes a 3.7 W/m2 (or whatever) change in radiative forcing do we find anything about the other 3 modes of energy transfer? I have failed to find them at all.

glc said this: So *for the moment* he is ignoring them. And *for the moment* I am considering them only briefly.

Post by magellan on Jan 27, 2010 15:30:44 GMT

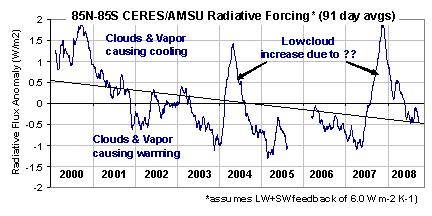

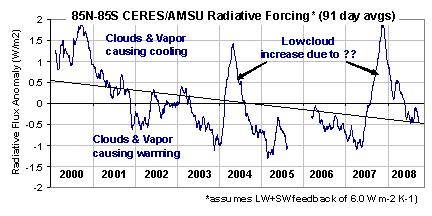

I have decided to refrain from these long, drawn-out debates and to comment only on specific claims that can be answered by presenting observational evidence, rather than getting sore eyes from reading the same worn-out lectures.

steve said:

> The extra water vapour that would be expected in a warmer world is thought to add to the warming induced by CO2.

So where is the extra water vapor? Has absolute water vapor content been increasing in the upper troposphere? That's a yes-or-no answer.

socold said:

> Yet even in that case it would mean doubling CO2 causes a significant change in cloud cover, which is a climate change in itself. So knowing the imbalance of doubling CO2 is a whopping 3.7 W/m2 means a significant climate change is inevitable, whatever form it may take. You can't resolve the imbalance without the climate changing significantly.

So now we're back to the "climate change" meme, avoiding the meat of the issue concerning CO2's role (which cannot be measured) in global temperatures. So any change in atmospheric responses to CO2 is "climate change" and necessarily must also be bad? It's no wonder "global warming" has been replaced by the much vaguer term "climate change".

Once again, observational evidence, the bane of AGW proponents, which was at one time a prerequisite for the scientific method, is available, and fortunately there are a few scientists interested enough to point it out: "The 2007-2008 Global Cooling Event: Evidence for Clouds as the Cause" and "Cloud Feedback Presentation for Fall 2009 AGU Meeting" (my bold):

> “I am arguing that we can’t measure feedbacks the way people have been trying to do it,” he said. “The climate modelers see from satellite data that warm years have fewer clouds, then assume that the warmth caused the clouds to dissipate. If this is true, it would be positive feedback and could lead to strong global warming. This is the way their models are programmed to behave.
>
> “My question to them was, ‘How do you know it wasn’t fewer clouds that caused the warm years, rather than the other way around?’ It turns out they didn’t know. They couldn’t answer that question.”

No, socold, climate model outputs are not a result of our current understanding of physics. They are outputs based on untested assumptions and ad hoc tuning to get the expected results.

As I see it, surface temperatures are constantly overshooting above and below what could now be considered "equilibrium" (a misnomer), as it hasn't warmed for at least 10 years, and after 2010 it could be said it hasn't cooled much either when averaged out. The most sane argument is that temperatures have peaked and remain relatively stable, and the question still remains: where is the evidence that CO2 drives temperature?

Now we must endure another year of warmology pronouncements that AGW is back on track, without anyone considering ENSO as the dominant factor in these fluctuations, nor will January satellite "record" temperatures be evaluated for cause and effect (latent heat, anyone?). Instead, it will be said it is all "consistent with" AGW theory. What I will say is that 2010 will most certainly not exceed 1998, at least for satellite measurements, based on historical telltales. Then, as is nearly always the case, temperatures will plummet (negative slope) throughout the year, ushering in the next La Nina and wiping out El Nino gains, then recharge (positive slope) the oceans, and the cycle repeats. The net result will be zero change from the ten-year average. The surface station values are not even worth discussing anymore; they are complete fabrications.

My WAG (with error bars) for 2010 "global" temperature will be posted, preferably after getting the January MSU data. Anyone else care to take a stab?

Post by steve on Jan 27, 2010 16:21:17 GMT

magellan,

Water vapour amounts and distribution seem quite variable, and strongly dependent on shorter term weather/climate. The record for some instruments is too short to show trends for certain. Here are a "Yes it is" and a "We're not sure" paper. I guess there will be a "No it isn't" paper or two up your sleeve.

Post by northsphinx on Jan 27, 2010 17:32:49 GMT

I am back to real measurements of outgoing radiation to space. They clearly show some very interesting "features" regarding radiation models. Since the atmospheric window is small except in clear-sky areas, let us just do the math without it. In that case, all radiation into space comes from the atmosphere itself. Since the atmosphere radiates in all directions, the outgoing radiation is in the same range as the down-going radiation, 240 W/m2, for a total of 480 W/m2 from the atmosphere itself. That seems to be in line with this: langley.atmos.colostate.edu/publications/Documents_1976/Stephens_JAS_1976.pdf. A cooling rate of 2 degrees a day gives about the same numbers, 400-500 W/m2. That is far more than what the earth itself CAN radiate as a blackbody (BB). So the atmosphere must gain this heat from some source other than radiation. That is "the other modes of energy transfer", which are very critical in the simplified radiative balance, especially when the net radiation from the earth to the atmosphere is in reality very low. Most heat is transferred by convection and the release of latent heat from the earth's surface, not by radiation.

But then a strange thing happens if you increase the atmosphere's ability to radiate heat: that increases outgoing radiation to space! Do the math. Incoming radiation is far less than outgoing, due to the smaller temperature difference with the earth than with space. So more CO2 will cool the earth, not the other way around.
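
The comparison in the post can be reproduced as a sketch. It assumes a global-mean surface temperature of 288 K and an emissivity of 1 for the surface (neither figure is in the post); the 240 W/m2 values are the post's own:

```python
SIGMA = 5.670374419e-8  # Stefan-Boltzmann constant, W m^-2 K^-4
T_SURFACE = 288.0       # assumed global-mean surface temperature, K (not from the post)

upward = 240.0    # W/m^2, atmosphere to space (figure from the post)
downward = 240.0  # W/m^2, atmosphere to surface (taken equal to upward, as in the post)
atmosphere_total = upward + downward
surface_blackbody = SIGMA * T_SURFACE ** 4  # maximum for a surface with emissivity 1

print(f"atmosphere emits {atmosphere_total:.0f} W/m^2, "
      f"surface blackbody at {T_SURFACE:.0f} K emits {surface_blackbody:.0f} W/m^2")
```

This only reproduces the two numbers being compared; it does not model where the atmosphere's energy comes from.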

Post by hunterson on Jan 27, 2010 18:34:19 GMT

One of the failings of AGW theory is that it seems to imply that only CO2 will raise H2O and cause the fateful cascade of positive feedbacks.

Why CO2?

What about......nearly any other forcing?

Post by woodstove on Jan 27, 2010 21:54:26 GMT

Careful, we're considering CO2's effect in a vacuum here. No feedback analysis permitted. As everyone who is someone knows, we're dealing with a non-chaotic, linear system as first explained by the great scientist St. Glevec.

Post by steve on Jan 28, 2010 11:01:00 GMT

hunterson writes:

> One of the failings of AGW theory is that it seems to imply that only CO2 will raise H2O and cause the fateful cascade of positive feedbacks. Why CO2? What about......nearly any other forcing?

It is wrong to state that this is a failure of AGW theory. The concept of "climate sensitivity" relates to any cause of warming/cooling, be it solar changes, albedo changes from different ice coverage, or greenhouse gas levels. E.g., any cause of warming is expected to raise the average levels of water vapour.
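
A minimal sketch of the linear forcing-response relation behind the "climate sensitivity" concept described above. The sensitivity value is hypothetical, and the sketch deliberately ignores the differences in efficacy between forcing types that some studies report:

```python
def equilibrium_warming(forcing_w_m2: float, sensitivity: float) -> float:
    """Equilibrium warming in K for a forcing in W/m^2.

    `sensitivity` (K per W/m^2) bundles the Planck response and all feedbacks,
    water vapour included. Where the forcing came from does not appear at all.
    """
    return sensitivity * forcing_w_m2

SENSITIVITY = 0.8  # hypothetical value, K per W/m^2
for source in ("solar", "ice-albedo", "CO2"):
    print(f"{source:>10}: {equilibrium_warming(3.7, SENSITIVITY):.2f} K for 3.7 W/m^2")
```

Every source of the same forcing gets the same response, water vapour feedback included, which is the point being made about "any other forcing".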

Post by steve on Jan 28, 2010 11:16:53 GMT

northsphinx,

You are getting there, I think.

Radiation from a given body of gas is calculated by the equation:

energy radiated per unit area = emissivity x Stefan-Boltzmann constant x (temperature in kelvin)^4

Emissivity depends on the atmospheric composition, and it is also a function of wavelength.
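
The formula above as a minimal Python sketch, treating the gas as a gray body with a single emissivity. Since emissivity actually varies with wavelength, a full calculation would integrate over the spectrum instead:

```python
SIGMA = 5.670374419e-8  # Stefan-Boltzmann constant, W m^-2 K^-4

def graybody_flux(emissivity: float, temp_k: float) -> float:
    """Flux emitted per unit area (W/m^2) by a gray body: emissivity * sigma * T^4."""
    if not 0.0 <= emissivity <= 1.0:
        raise ValueError("emissivity must lie between 0 and 1")
    return emissivity * SIGMA * temp_k ** 4

# A blackbody at 255 K, and the same temperature with a hypothetical emissivity of 0.8
print(f"{graybody_flux(1.0, 255.0):.1f} W/m^2")
print(f"{graybody_flux(0.8, 255.0):.1f} W/m^2")
```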

An atmosphere with more CO2 will radiate more (emissivity is higher), but will also absorb more.

The increase in absorption means that, on average, the radiation into space comes from a higher level in the atmosphere (i.e. a photon emitted 4 km up will have a slightly lower chance of escaping to space because of the slight increase in the number of CO2 molecules it has to get past).

Radiation from these higher levels is less because the temperature is lower (more than 99.9999% of radiation from a CO2 molecule in the atmosphere follows the excitation of the CO2 molecule through collision with another molecule. Lower temperatures result in fewer and less energetic collisions. Fewer than 0.00001% of emissions are due to the CO2 molecule being excited by interacting with a photon).

If the temperature of the atmosphere were the same all the way up, then there would be no warming from increased CO2. If it got warmer as you went up, there would be cooling due to increased CO2.
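
The argument above is often illustrated with a toy model in which all escaping radiation comes from a single emission level whose temperature follows a constant lapse rate. A sketch under those assumptions; the 288 K surface temperature and the 150 m rise in emission height are hypothetical illustration values:

```python
SIGMA = 5.670374419e-8  # Stefan-Boltzmann constant, W m^-2 K^-4
T_SURFACE = 288.0       # hypothetical surface temperature, K

def olr_single_level(emission_height_km: float, lapse_rate_k_per_km: float) -> float:
    """Outgoing flux if all escaping radiation came from one level (toy model)."""
    temp = T_SURFACE - lapse_rate_k_per_km * emission_height_km
    return SIGMA * temp ** 4

Z_OLD, Z_NEW = 5.0, 5.15  # hypothetical emission heights before/after adding CO2, km

# Positive lapse rate: cooler with height; zero: isothermal; negative: warmer with height
for label, lapse in (("cooling with height", 6.5), ("isothermal", 0.0), ("warming with height", -6.5)):
    change = olr_single_level(Z_NEW, lapse) - olr_single_level(Z_OLD, lapse)
    print(f"{label:>20}: change in outgoing flux {change:+.2f} W/m^2")
```

A drop in outgoing flux means the system has to warm until balance is restored; the zero and positive changes correspond to the isothermal and warming-with-height cases in the post.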

Post by glc on Jan 28, 2010 11:44:34 GMT

> Now we must endure another year of warmology pronouncements that AGW is back on track, without anyone considering ENSO as the dominant factor in these fluctuations, nor will January satellite "record" temperatures be evaluated for cause and effect (latent heat, anyone?). Instead, it will be said it is all "consistent with" AGW theory.

Of course ENSO is the dominant factor - over the short term. That's exactly the point I was making 18 months ago when I said everyone was reading too much into the falling temperatures during the 2007/08 La Nina. But, even allowing for ENSO, there is still a warming trend. The so-called cooling trend relies on using a recent El Nino year as a starting point, e.g. 1998 and 2002/03.
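
The starting-year point can be illustrated with synthetic numbers (not real temperature data): a steady hypothetical trend plus one warm El Nino-like spike, with ordinary least-squares slopes computed from the start of the series and from the spike year:

```python
def ols_slope(xs, ys):
    """Ordinary least-squares slope of ys against xs."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sxx = sum((x - mean_x) ** 2 for x in xs)
    return sxy / sxx

# Synthetic series: hypothetical 0.02 K/yr trend plus a single 0.3 K spike in year 8
TREND = 0.02
SPIKE_YEAR, SPIKE = 8, 0.3
years = list(range(20))
anomalies = [TREND * t + (SPIKE if t == SPIKE_YEAR else 0.0) for t in years]

full_slope = ols_slope(years, anomalies)
from_spike = ols_slope(years[SPIKE_YEAR:], anomalies[SPIKE_YEAR:])
print(f"slope over whole series: {full_slope:.4f} K/yr")
print(f"slope starting at spike: {from_spike:.4f} K/yr")
```

Starting the fit at the spike cuts the apparent trend to less than half here, even though the underlying trend never changed.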
