Post by nautonnier on Aug 30, 2016 14:16:55 GMT

I was thinking of going to Australia but I failed the mandatory Whinging course.

Post by missouriboy on Aug 30, 2016 16:48:07 GMT

It was an easy fight Missouri Yow ... even a blind man could hit those red Santa Claus suits. Morgan's riflemen, with their Kentucky long rifles, got 3 volleys into them at Saratoga before they even got within musket range.

Post by duwayne on Aug 30, 2016 20:58:07 GMT

Missouriboy, you said above that... "While this may well be true of the atmosphere, the fact that the mean temperature of the upper layers of the oceans has increased by about 0.7 C over the last 165 years suggests that there is a larger residence time associated with solar energy that is absorbed by the oceans."

Are you ruling CO2 out as a cause?

Here are numbers which I’m quoting from memory so beware.

The total solar energy delivered by the sun to the earth every hour of every day is about 240 watts per square meter, averaged over the earth’s total surface.

The energy has varied between Solar Cycle Max and Solar Cycle Min over the past several solar cycles by only about 0.2%.

The estimated direct effect of a doubling of CO2 according to the experts is about 3.7 watts per square meter or 1.5% of the solar energy.

Fortunately, for the same reasons I described in my earlier post, not all of this energy stays in the system, because the increase in global temperatures means more emitted photons.

The resulting temperature increase is estimated to be about 1 C per doubling of CO2, according to the experts, plus or minus any system feedbacks.
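As a rough check on the arithmetic above, here is a minimal Python sketch. It uses the from-memory figures quoted in the post (240 and 3.7 watts per square meter) and assumes, as a simplification that is not in the post, that the earth radiates like a blackbody at its effective emission temperature. The Stefan-Boltzmann constant and the function names are supplied for illustration only.

```python
# Rough no-feedback check of the numbers quoted above.
# ASSUMPTION: the earth is treated as a blackbody radiating at its
# effective emission temperature; feedbacks are ignored entirely.

SIGMA = 5.670374419e-8        # Stefan-Boltzmann constant, W m^-2 K^-4
ABSORBED_SOLAR = 240.0        # W/m^2, figure quoted from memory in the post
CO2_DOUBLING_FORCING = 3.7    # W/m^2, figure quoted in the post


def effective_temperature(flux_w_m2):
    """Blackbody temperature (K) that emits the given flux."""
    return (flux_w_m2 / SIGMA) ** 0.25


def no_feedback_warming(flux_w_m2, forcing_w_m2):
    """Warming (K) needed to emit the extra forcing, linearized around
    the effective temperature: dT = dF / (4 * sigma * T^3)."""
    t_eff = effective_temperature(flux_w_m2)
    return forcing_w_m2 / (4.0 * SIGMA * t_eff ** 3)


forcing_fraction = CO2_DOUBLING_FORCING / ABSORBED_SOLAR
t_eff = effective_temperature(ABSORBED_SOLAR)
warming = no_feedback_warming(ABSORBED_SOLAR, CO2_DOUBLING_FORCING)

print(f"Forcing as a share of solar input: {forcing_fraction:.1%}")
print(f"Effective emission temperature:    {t_eff:.0f} K")
print(f"No-feedback warming per doubling:  {warming:.2f} K")
```

Under these assumptions the forcing comes out at roughly 1.5% of the solar input and the warming at close to 1 C per doubling, which is consistent with the figures quoted from memory.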

Post by missouriboy on Aug 30, 2016 22:17:32 GMT

> Missouriboy, you said above that... "While this may well be true of the atmosphere, the fact that the mean temperature of the upper layers of the oceans has increased by about 0.7 C over the last 165 years suggests that there is a larger residence time associated with solar energy that is absorbed by the oceans."
>
> Are you ruling CO2 out as a cause?
>
> Here are numbers which I’m quoting from memory so beware.
>
> The total solar energy delivered by the sun to the earth every hour of every day is about 240 watts per square meter, averaged over the earth’s total surface.
>
> The energy has varied between Solar Cycle Max and Solar Cycle Min over the past several solar cycles by only about 0.2%.
>
> The estimated direct effect of a doubling of CO2 according to the experts is about 3.7 watts per square meter or 1.5% of the solar energy.
>
> Fortunately, for the same reasons I described in my earlier post, not all of this energy stays in the system, because the increase in global temperatures means more emitted photons.
>
> The resulting temperature increase is estimated to be about 1 C per doubling of CO2, according to the experts, plus or minus any system feedbacks.

In the interest of scientific inquiry, I have attempted to peruse the literature for anything that could convince me that increasing CO2 can heat an oceanic water column. I have not found anything that I can swallow. Short-wave radiation, from the ultraviolet through the green portions of the spectrum, appears to provide the most effective energy transfer to the water column because of its depth of penetration. Changes in the UV portion of the solar spectrum still seem to be poorly understood, yet there are a couple of atmospheric windows in the UV which, in combination with cloud cover (or the lack of it), could provide major inputs to the oceans.

As far as I can tell, longer-wave radiation in the infrared, which is what the literature seems to claim that CO2 can re-radiate, doesn't do squat in terms of water heating. So yes, I've pretty much written that off unless something new (or old) emerges that convinces me otherwise. And all those photons still have to get back to the atmosphere before they can be emitted back to space. Anything absorbed by the water column would seem to be out of range until it is released back to the atmosphere, which, of course, is what warms the northern latitudes. As far as I can tell, energy release to the atmosphere is also poorly quantified. But I don't see any way for the oceans to warm without retaining more heat, and that heat comes from shorter-wave radiation (or heat from below?). If, on average, the oceans warm, then it stands to reason that more energy is being absorbed than is being released. And I haven't found anything to convince me that CO2 can accomplish that.

Post by sigurdur on Aug 30, 2016 23:53:23 GMT

I read a paper, and hopefully posted it, regarding CO2 warming the oceans. The instruments that recorded the temperature/penetration were made for that exact purpose.

The results showed that higher LW radiation accelerated the cooling. The LW hit the water, got a few microns in, and actually caused the skin temperature to rise quickly, but the water evaporated faster than it could transfer that heat TO the water below.

From a pure physics standpoint, the results made total sense. SW radiation penetrates BEFORE and WHILE it is dispersing its energy. LW just can't get more than a few microns in, and all the energy is spent.

The results "troubled" the scientists who were doing the measurements. At first they believed it was calibration error, etc., etc. They finally accepted what the observations indicated. But it was a hard pill for them to swallow.
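To put the SW versus LW penetration argument in numbers, here is a minimal Python sketch using simple exponential (Beer-Lambert) attenuation. The two absorption coefficients are assumed order-of-magnitude values for liquid water, chosen for illustration; they are not taken from the paper mentioned above, and the sketch says nothing about evaporation or heat transfer at the skin.

```python
import math

# ASSUMED order-of-magnitude absorption coefficients for liquid water,
# in units of 1/m. Illustrative only; not taken from the paper above.
ABSORPTION_PER_M = {
    "blue-green visible (SW)": 0.05,   # clear ocean water, rough value
    "thermal infrared (LW)": 1.0e5,    # around 10 micron wavelength, rough value
}


def fraction_remaining(absorption_per_m, depth_m):
    """Beer-Lambert law: fraction of radiation not yet absorbed at a depth."""
    return math.exp(-absorption_per_m * depth_m)


def depth_for_absorbed_fraction(absorption_per_m, absorbed_fraction):
    """Depth (m) by which the given fraction of radiation has been absorbed."""
    return -math.log(1.0 - absorbed_fraction) / absorption_per_m


depths_99 = {
    band: depth_for_absorbed_fraction(coeff, 0.99)
    for band, coeff in ABSORPTION_PER_M.items()
}

for band, depth in depths_99.items():
    print(f"{band}: 99% absorbed within {depth:.3g} m")
```

With these assumed coefficients, the infrared is essentially fully absorbed within tens of microns while the blue-green light takes tens of meters, which is the contrast the post describes.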

Post by Ratty on Aug 31, 2016 0:23:37 GMT

> I was thinking of going to Australia but I failed the mandatory Whinging course.

Shame. I was getting the spare room ready ......

Post by missouriboy on Aug 31, 2016 0:27:07 GMT

> I read a paper, and hopefully posted it, regarding CO2 warming the oceans. The instruments that recorded the temperature/penetration were made for that exact purpose.
>
> The results showed that higher LW radiation accelerated the cooling. The LW hit the water, got a few microns in, and actually caused the skin temperature to rise quickly, but the water evaporated faster than it could transfer that heat TO the water below.
>
> From a pure physics standpoint, the results made total sense. SW radiation penetrates BEFORE and WHILE it is dispersing its energy. LW just can't get more than a few microns in, and all the energy is spent.
>
> The results "troubled" the scientists who were doing the measurements. At first they believed it was calibration error, etc., etc. They finally accepted what the observations indicated. But it was a hard pill for them to swallow.

It always is when you have your heart (or wallet) dependent on an outcome. I must look that paper up.

Post by sigurdur on Aug 31, 2016 1:17:40 GMT

There is a feller from Chicago who thinks CO2 radiation warms the oceans. He claims that the wave action captures the warmed water before it evaporates. The ole curl technique?

To really make a difference, using that process, there would have to be one hawl of a lot of white water. And on the oceans, there really just isn't that much white water.

There are waves, but the skin of the water isn't broken most of the time.

I think his name was/is Pieere or some such.

Post by walnut on Aug 31, 2016 2:37:33 GMT

Yeah, that sounds crazy.

Post by missouriboy on Aug 31, 2016 4:53:58 GMT

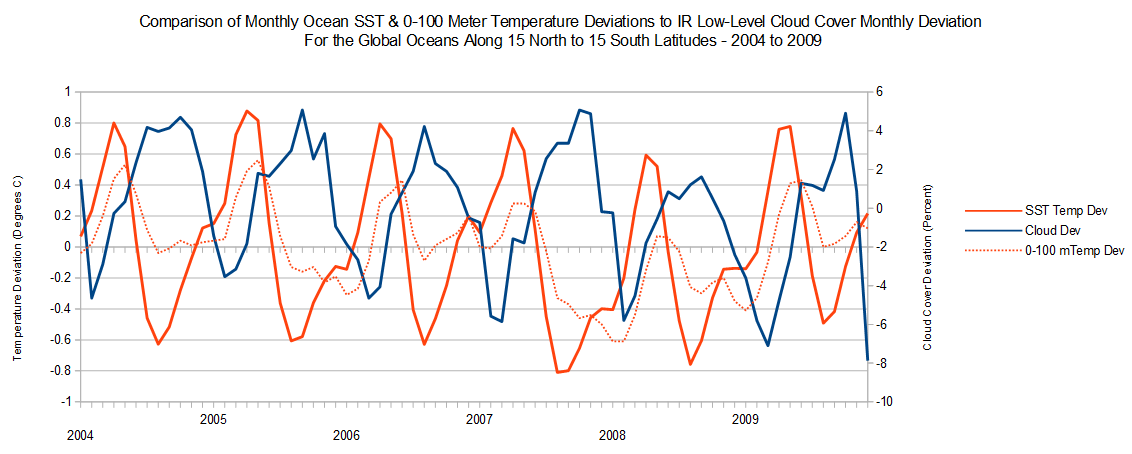

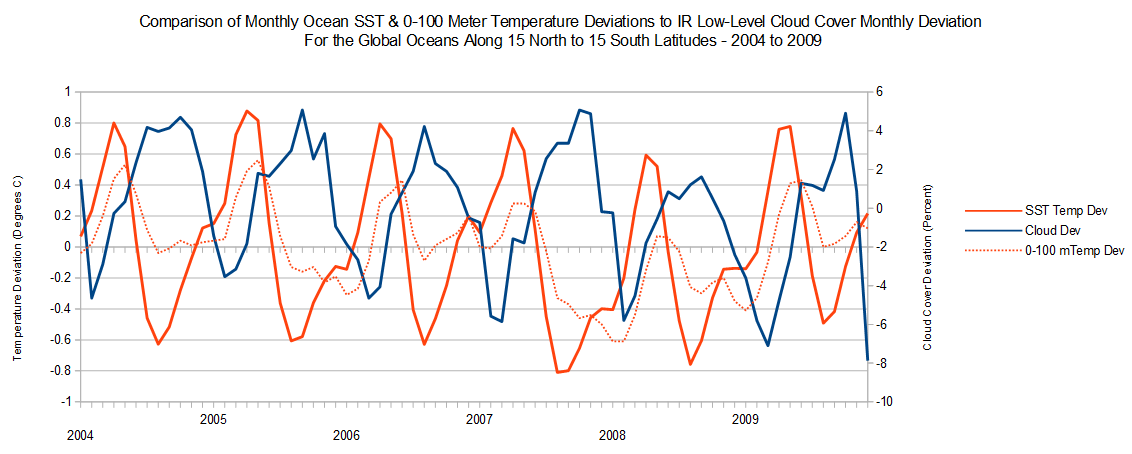

I posted this graphic before, but I dug it out again. These are mean ARGO temperature deviations by month for the world oceans between 15 N and 15 S latitude, compared to mean cloud cover deviations (in %) for the same area. I originally used it to show the large difference in temperature deviations that occurs as cloud cover goes down. However, it occurred to me that, since these years span the solar minimum between SC23 and SC24, it might show changes in the energy being received from the sun as you progress to solar minimum. So you tell me what you think.

If you create a ratio of SST temperature deviation to cloud deviation for periods of lowest cloud cover (hence largest solar gain for the oceans), you are essentially normalizing temperature gain by unit of negative cloud deviation. While I did not re-plot this graph with those values, the SST ratio declines from 2004 to 2008 from about 0.17 deg. per one percent of cloud cover decline to about 0.1 deg. per one percent of cloud cover decline. In other words, at solar minimum there is 0.07 deg. less warming being added to the SST value for the same unit of cloud cover. For temperatures averaged over 0-100 meters, this ratio goes from about 0.14 C to 0.02 C, a decline of 0.12 C.

So ... **IF** (note the capitalized and bold type) cloud cover is a good proxy for atmospheric water vapor and other gases that might absorb and reflect incoming solar radiation, then it would appear that, for this case, averaged over a 30-degree band of the world's oceans and normalized by cloud cover percentage, solar energy inputs to the ocean at solar cycle minimum are potentially a lot lower than four years closer to solar maximum. Of course, verifying this would require a lot more samples (you knew that was coming).
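The normalization described above can be sketched in a few lines of Python. The function name and the single worked input pair are hypothetical and only illustrate the ratio; the four ratio values are the approximate ones quoted in the post, not values recomputed from the ARGO or cloud data.

```python
def gain_per_cloud_percent(temp_dev_c, cloud_dev_pct):
    """Temperature deviation (deg C) per one percent of cloud cover decline.

    Hypothetical helper: only defined here for negative cloud deviations,
    i.e. periods when cloud cover is below its mean.
    """
    if cloud_dev_pct >= 0:
        raise ValueError("expected a negative cloud cover deviation")
    return temp_dev_c / -cloud_dev_pct


# Approximate ratios quoted in the post, as (2004, 2008) pairs,
# in deg C per one percent of cloud cover decline.
QUOTED_RATIOS = {
    "SST": (0.17, 0.10),
    "0-100 m mean": (0.14, 0.02),
}

# Absolute and relative decline of each ratio between the two years.
declines = {
    layer: round(start - end, 2)
    for layer, (start, end) in QUOTED_RATIOS.items()
}
relative_declines = {
    layer: round((start - end) / start, 2)
    for layer, (start, end) in QUOTED_RATIOS.items()
}

for layer in QUOTED_RATIOS:
    print(f"{layer}: ratio fell by {declines[layer]} deg C per 1% "
          f"({relative_declines[layer]:.0%} of its starting value)")

# Made-up input pair, purely to show how one ratio would be formed:
# a +0.34 C deviation during a -2% cloud deviation.
example_ratio = gain_per_cloud_percent(0.34, -2.0)
```

Expressing the decline as a share of the starting ratio makes the difference between the surface and the 0-100 meter average easier to compare, though both rest on the same small number of low-cloud periods.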

Post by nautonnier on Aug 31, 2016 7:06:30 GMT

What that graphic shows me is the amount of heat that can leave the top 100 meters in a very short period of time. It shows that an increase in cloudiness could easily lead to a global temperature drop.

Post by nonentropic on Aug 31, 2016 10:30:43 GMT

Incoming energy would thus change rather than just being some fixed surplus over re-radiation. Do the models include this in any way?

The tropics rule the world's weather, and WUWT has many papers discussing the effective terminal tropical temperature. Ultimately the clouds determine the peak air temperature, and the cloud is modulated to an extent by solar activity. This is the frontier for research; the poles are just along for the ride.

If you look at the Antarctic, nobody really knows if the ice extent is impacted positively or negatively by a world temperature rise. Additionally, GW has shown that it is the wind which determines the ice extent in the Arctic. Many years ago Kiwi used to say there is only so much cold to go round: if one area warmed or cooled, another area would reflect that change.

MB you have the bones of an important piece of work but don't expect a flood of funding, just saying.

Post by missouriboy on Aug 31, 2016 12:21:49 GMT

> Incoming energy would thus change rather than just being some fixed surplus over re-radiation. Do the models include this in any way?
>
> The tropics rule the world's weather, and WUWT has many papers discussing the effective terminal tropical temperature. Ultimately the clouds determine the peak air temperature, and the cloud is modulated to an extent by solar activity. This is the frontier for research; the poles are just along for the ride.
>
> If you look at the Antarctic, nobody really knows if the ice extent is impacted positively or negatively by a world temperature rise. Additionally, GW has shown that it is the wind which determines the ice extent in the Arctic. Many years ago Kiwi used to say there is only so much cold to go round: if one area warmed or cooled, another area would reflect that change.
>
> MB you have the bones of an important piece of work but don't expect a flood of funding, just saying.

Story of my life, Nonentropic. I do it all for love.

Post by duwayne on Aug 31, 2016 18:19:43 GMT

> I posted this graphic before, but I dug it out again. These are mean ARGO temperature deviations by month for the world oceans between 15 N and 15 S latitude, compared to mean cloud cover deviations (in %) for the same area. I originally used it to show the large difference in temperature deviations that occurs as cloud cover goes down. However, it occurred to me that, since these years span the solar minimum between SC23 and SC24, it might show changes in the energy being received from the sun as you progress to solar minimum. So you tell me what you think.
>
> If you create a ratio of SST temperature deviation to cloud deviation for periods of lowest cloud cover (hence largest solar gain for the oceans), you are essentially normalizing temperature gain by unit of negative cloud deviation. While I did not re-plot this graph with those values, the SST ratio declines from 2004 to 2008 from about 0.17 deg. per one percent of cloud cover decline to about 0.1 deg. per one percent of cloud cover decline. In other words, at solar minimum there is 0.07 deg. less warming being added to the SST value for the same unit of cloud cover. For temperatures averaged over 0-100 meters, this ratio goes from about 0.14 C to 0.02 C, a decline of 0.12 C.
>
> So ... **IF** (note the capitalized and bold type) cloud cover is a good proxy for atmospheric water vapor and other gases that might absorb and reflect incoming solar radiation, then it would appear that, for this case, averaged over a 30-degree band of the world's oceans and normalized by cloud cover percentage, solar energy inputs to the ocean at solar cycle minimum are potentially a lot lower than four years closer to solar maximum. Of course, verifying this would require a lot more samples (you knew that was coming).

Some comments….

Roy Spencer says the current global temperature of about 14C would be much lower, about -20C, without the warming effect of greenhouse gases. But he says the average global temperature would change even more and be far too hot, 60C, without clouds and weather. Clouds have a big impact on global temperatures.

Willis Eschenbach (spelling?), in a post on Watts Up With That some time ago, found essentially no 11-year Solar Cycle signal in the global temperature data. This would suggest there might not be much change in cloud cover over the cycle, or if there is, it might even counter the effect of insolation on warming.

Several years ago, Svensmark published a paper which showed a strong correlation between cosmic rays and cloud cover. However, at the end of the period he studied, and in the years after his initial finding was published, the correlation did not hold. He continues to study the issue and has reported some promising new findings, but his conclusions remain questionable.

Post by sigurdur on Aug 31, 2016 18:25:09 GMT

At least Svensmark is looking outside of the box. Have to give him credit for that. Does his cosmic ray theory hold water? I am not sure whether it does or doesn't. There could very well be another source of cloud seeding that combines with cosmic rays and hasn't been investigated, or found, yet.

The more I learn, the more I realize how little I know.
